eJournal: uffmm.org

ISSN 2567-6458, 2 April 2022 – 3 April 2022

Email: info@uffmm.org

Author: Gerd Doeben-Henisch

Email: gerd@doeben-henisch.de

BLOG-CONTEXT

This post is part of the Philosophy of Science theme which is part of the uffmm blog.

PREFACE

In a preceding post I illustrated how one can apply the concept of an empirical theory, strongly inspired by Karl Popper, to an everyday problem: a county and its demographic problem(s). In this post I would like to develop this idea a little further.

AN EMPIRICAL THEORY AS A DEVELOPMENT PROCESS

CITIZENs – natural experts

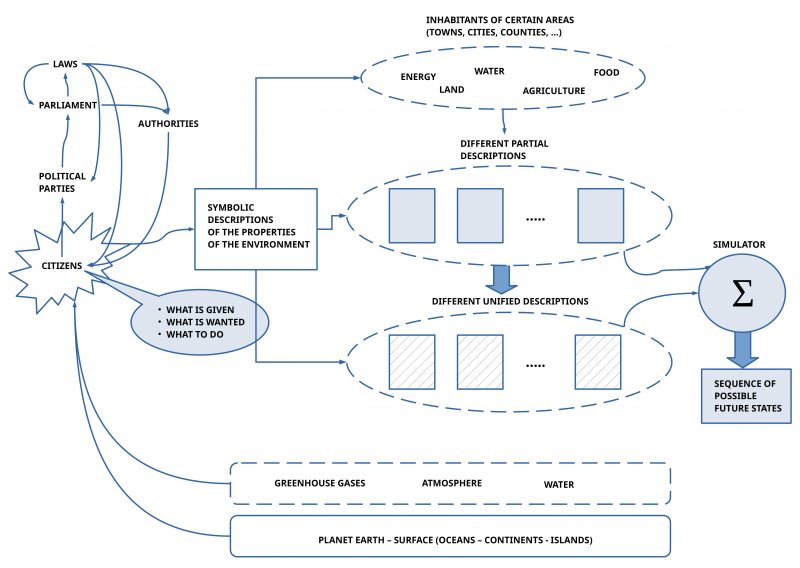

As a starting point we assume citizens, understood as our ‘natural experts’, who are members of a democratic society with political parties, a freely elected parliament that can create helpful laws for societal life, and authorities serving the needs of the citizens.

SYMBOLIC DESCRIPTIONS

To coordinate their actions through sufficient communication, the citizens produce symbolic descriptions that make public how they see the ‘given situation’, which kinds of ‘future states’ (‘goals’) they want to achieve, and a list of ‘actions’ which can ‘change/transform’ the given situation stepwise into the envisioned future state.
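A minimal sketch of how such a symbolic description could be held as data, assuming that statements are plain sentences collected in sets. The names `ChangeRule`, `Description` and `goal_fulfilment`, as well as the example sentences, are illustrative assumptions and not taken from the oksimo software.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ChangeRule:
    """Hypothetical 'action': applicable when all `condition` statements hold."""
    name: str
    condition: frozenset
    remove: frozenset = frozenset()   # statements that stop being true
    add: frozenset = frozenset()      # statements that become true


@dataclass
class Description:
    """Hypothetical container for one symbolic description."""
    situation: frozenset              # how the citizens see the given situation
    goal: frozenset                   # statements that should hold in the future state
    actions: list = field(default_factory=list)


def goal_fulfilment(state, goal):
    """Share of goal statements already contained in `state` (0.0 to 1.0)."""
    if not goal:
        return 1.0
    return len(goal & state) / len(goal)


# Assumed example content, loosely following the county scenario.
description = Description(
    situation=frozenset({"Housing in the county is scarce."}),
    goal=frozenset({"Housing is available.", "Young families move in."}),
    actions=[
        ChangeRule(
            name="build flats",
            condition=frozenset({"Housing in the county is scarce."}),
            remove=frozenset({"Housing in the county is scarce."}),
            add=frozenset({"Housing is available."}),
        )
    ],
)

print(goal_fulfilment(description.situation, description.goal))
```

Keeping situation, goal and actions as separate fields mirrors the three parts named in the text; the fulfilment measure is only one possible way to compare a state with a goal.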

LEVELS OF ABSTRACTION

Using everyday language, possibly enriched with some mathematical expressions, one can talk about our world of experience on different levels of abstraction. To get a rather wide scope one starts with the most abstract concepts and then breaks these down, step by step, with more concrete properties/features until the concrete expressions are ‘touching the real experience’. In most cases it is helpful not to describe everything in one description but to partition ‘the whole’ into several more concrete descriptions that capture the main points. Afterwards it should be possible to ‘unify’ these more concrete descriptions into one large picture showing how they all ‘work together’.

LOGICAL INFERENCE BY SIMULATION

A very useful property of empirical theories is that one can derive, from given assumptions and assumed rules of inference, possible consequences which are ‘true’ if the assumptions and the rules of inference are ‘true’.

The descriptions outlined above are seen in this post as texts which satisfy the requirements of an empirical theory, such that the ‘simulator’ is able to derive from these assumptions all possible ‘true’ consequences if the assumptions are assumed to be ‘true’. In particular, the simulator delivers not just one single consequence but a whole ‘sequence of consequences’ following each other in time.
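The idea of deriving a ‘sequence of consequences’ can be sketched as a simple loop, assuming that a state is a set of statements and that each rule is a triple (condition, statements to remove, statements to add). This representation, the function names `step` and `simulate`, and the example sentences are assumptions made for illustration; they do not describe how the oksimo simulator is implemented.

```python
def step(state, rules):
    """Apply every rule whose condition holds in `state`; return the next state."""
    removed, added = set(), set()
    for condition, remove, add in rules:
        if condition <= state:        # all condition statements are in the state
            removed |= remove
            added |= add
    # Removals are applied before additions, so an added statement always survives.
    return frozenset((state - removed) | added)


def simulate(start, rules, max_steps=10):
    """Return the sequence of states, stopping early when nothing changes."""
    history = [frozenset(start)]
    for _ in range(max_steps):
        nxt = step(history[-1], rules)
        if nxt == history[-1]:
            break
        history.append(nxt)
    return history


# Assumed example rules: (condition, remove, add)
rules = [
    ({"Housing is scarce."}, {"Housing is scarce."}, {"New flats are built."}),
    ({"New flats are built."}, set(), {"Young families move in."}),
]

history = simulate({"Housing is scarce."}, rules)
for t, state in enumerate(history):
    print(t, sorted(state))
```

Each entry of `history` is one derived state, so the list as a whole plays the role of the consequences following each other in time. If the start state and the rules are accepted as ‘true’, every later state follows from them by construction.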

PURE WWW KNOWLEDGE SPACE

This simple outline describes the application format of the oksimo software, which is understood here as a kind of ‘theory machine’ for everybody.

It is assumed that a symbolic description is given as a plain text file or as an HTML page somewhere in the World Wide Web [WWW].

The simulator, realized as an oksimo program, can load such a file and run a simulation. The output is sent back as an HTML page.

No special database is needed inside the oksimo application. All oksimo-related HTML pages placed by citizens somewhere in the WWW constitute a ‘global public knowledge space’ accessible to everybody.
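The cycle described in this section (read a plain text description, return an HTML page, keep no database) can be sketched as follows. The text format of one statement per line, the function names `parse_statements` and `render_html`, and the page layout are assumptions made for this sketch; the actual oksimo file format is not specified in this post. Fetching the text from a web address is left out so that the example runs offline.

```python
import html


def parse_statements(text):
    """Assumed format: one statement per non-empty line."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def render_html(title, states):
    """Render a sequence of states (lists of statements) as one HTML page."""
    parts = [
        "<!DOCTYPE html>",
        '<html><head><meta charset="utf-8">',
        f"<title>{html.escape(title)}</title></head><body>",
        f"<h1>{html.escape(title)}</h1>",
    ]
    for t, state in enumerate(states):
        parts.append(f"<h2>Step {t}</h2><ul>")
        # Escape every statement so that characters like '<' stay plain text.
        parts.extend(f"<li>{html.escape(s)}</li>" for s in state)
        parts.append("</ul>")
    parts.append("</body></html>")
    return "\n".join(parts)


# Assumed example input, standing in for a text file found in the WWW.
source_text = """
Housing is scarce.
Birth rate < death rate.
"""

start = parse_statements(source_text)
page = render_html("Demo simulation", [start])
print(page)
```

Because the page is produced from the input text alone, the result can itself be published as an ordinary HTML page without any server-side storage.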

DISTRIBUTED OKSIMO INSTANCES

An oksimo server positioned behind the oksimo address ‘oksimo.com’ can, on demand, produce a ‘simulator instance’ that runs one simulation. Many simulations can run in parallel. A simulation can also be connected in real time to Internet-of-Things [IoT] instances to receive empirical data used in the simulation. In ‘interactive mode’ an oksimo simulation furthermore allows the participation of ‘actors’, which function as ‘dynamic rule instances’: they receive input from the simulated given situation and can respond ‘on their own’. This turns a simulation into an ‘open process’ like those we encounter in ‘everyday real processes’. An ‘actor’ need not be a ‘human’ actor; it can also be a ‘non-human’ actor. Furthermore, it is possible to establish a ‘simulation-meta-level’: because a simulation as a whole represents a ‘full theory’, one can feed this whole theory to an ‘artificial intelligence algorithm’ which does not run only one simulation but checks the space of ‘all possible simulations’ and thereby identifies those sub-spaces which are, according to the defined goals, ‘zones of special interest’.
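The ‘simulation-meta-level’ can be illustrated with a small sketch. Instead of an ‘artificial intelligence algorithm’ it uses a plain exhaustive breadth-first search, which is only a stand-in chosen for brevity. The rule format (name, condition, remove, add), the function name `explore` and the example rules are assumptions, not part of the oksimo software. Unlike a single simulation run, the search applies one rule at a time and follows every applicable choice.

```python
from collections import deque


def explore(start, rules, goal, max_depth=4):
    """Breadth-first search over rule sequences.

    Returns one shortest rule-name path for every distinct reachable
    state that contains all goal statements.
    """
    start = frozenset(start)
    queue = deque([(start, [])])
    seen = {start}
    hits = []
    while queue:
        state, path = queue.popleft()
        if goal <= state:             # goal reached: record the path, stop expanding
            hits.append(path)
            continue
        if len(path) >= max_depth:
            continue
        for name, condition, remove, add in rules:
            if condition <= state:
                nxt = frozenset((state - remove) | add)
                if nxt not in seen:   # visit every state only once
                    seen.add(nxt)
                    queue.append((nxt, path + [name]))
    return hits


# Assumed example rules: (name, condition, remove, add)
rules = [
    ("build flats", {"Housing is scarce."}, {"Housing is scarce."},
     {"Flats are available."}),
    ("open daycare", set(), set(), {"Daycare is available."}),
    ("families move in", {"Flats are available.", "Daycare is available."},
     set(), {"Young families move in."}),
]

paths = explore({"Housing is scarce."}, rules, goal={"Young families move in."})
print(paths)
```

The returned paths mark the part of the search space that satisfies the goal, a very small analogue of the ‘zones of special interest’. For realistic rule sets the space grows quickly, which is where a more selective search method than this exhaustive one would be needed.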