Author: Gerd Doeben-Henisch

Email: info@uffmm.org

Time: Sept 25, 2023 – Oct 3, 2023

Translation: This text is a translation from the German Version into English with the aid of the software deepL.com as well as with chatGPT4, moderated by the author. The style of the two translators is different. The author is not good enough to classify which translator is ‘better’.

CONTEXT

This text is the outcome of a conference held at the Technical University of Darmstadt (Germany) with the title: Discourses of disruptive digital technologies using the example of AI text generators ( https://zevedi.de/en/topics/ki-text-2/ ). A German version of this article will appear in a book from de Gruyter as open access in the beginning of 2024.

Collective human-machine intelligence and text generation. A transdisciplinary analysis.

Abstract

Based on the conference theme “AI – Text and Validity. How do AI text generators change scientific discourse?” as well as the special topic “Collective human-machine intelligence using the example of text generation”, the possible interaction between text generators and scientific discourse is worked through in a transdisciplinary analysis. For this purpose, the concept of scientific discourse is specified on a case-by-case basis using the text types empirical theory and sustainable empirical theory in such a way that the role of human and machine actors in these discourses can be sufficiently specified. The result shows a very clear limitation of current text generators compared to the requirements of scientific discourse. This leads to further fundamental analyses, using the dimension of time to examine the phenomenon of the qualitatively new, and using the foundations of decision-making to examine the problem of the inherent bias of the modern scientific disciplines. A solution to the inherent bias as well as to the factual disconnectedness of the many individual disciplines is located in a new service of transdisciplinary integration, achieved by re-activating the philosophy of science as a genuine part of philosophy. This leaves open the question of whether a supervision of the individual sciences by philosophy could be a viable path. Finally, the borderline case of a world in which humans no longer have a human counterpart is pointed out.

STARTING POINT

This text takes its starting point from the conference topic “AI – Text and Validity. How do AI text generators change scientific discourses?” and adds to this topic the perspective of a Collective Human-Machine Intelligence using the example of text generation. The concepts of text and validity, AI text generators, scientific discourse, and collective human-machine intelligence that are invoked in this constellation represent different fields of meaning that cannot automatically be interpreted as elements of a common conceptual framework.

TRANSDISCIPLINARY

In order to let the mentioned terms appear as elements of a common conceptual framework, a meta-level is needed from which one can talk about these terms and their possible relations to each other. This approach is usually located in the philosophy of science, which can have as its subject not only single terms or whole propositions, but even whole theories that are compared or possibly even united. The term transdisciplinary [1], which is often used today, is understood here in this philosophy-of-science sense as an approach in which the integration of different concepts is achieved by introducing appropriate meta-levels. Such a meta-level ultimately always represents a structure in which all important elements and relations can be gathered.

[1] Jürgen Mittelstraß paraphrases the possible meaning of the term transdisciplinarity as a “research and knowledge principle … that becomes effective wherever a solely technical or disciplinary definition of problem situations and problem solutions is not possible…”. Article Methodological Transdisciplinarity, in LIFIS ONLINE, www.leibniz-institut.de, ISSN 1864-6972, p.1 (first published in: Technology Assessment – Theory and Practice No.2, Vol. 14, June 2005, 18-23). In his text Mittelstraß distinguishes transdisciplinarity from the disciplinary and from the interdisciplinary. However, he uses only a general characterization of transdisciplinarity as a research guiding principle and scientific form of organization. He leaves the concrete conceptual formulation of transdisciplinarity open. This is different in the present text: here the transdisciplinary theme is projected down to the concreteness of the related terms and – as is usual in philosophy of science (and meta-logic) – realized by means of the construct of meta-levels.

SETTING UP A STRUCTURE

Here the notion of scientific discourse is assumed as a basic situation in which different actors can be involved. The main types of actors considered here are humans, who as the species Homo sapiens represent a part of the biological systems on planet Earth, and text generators, which represent a technical product consisting of a combination of software and hardware.

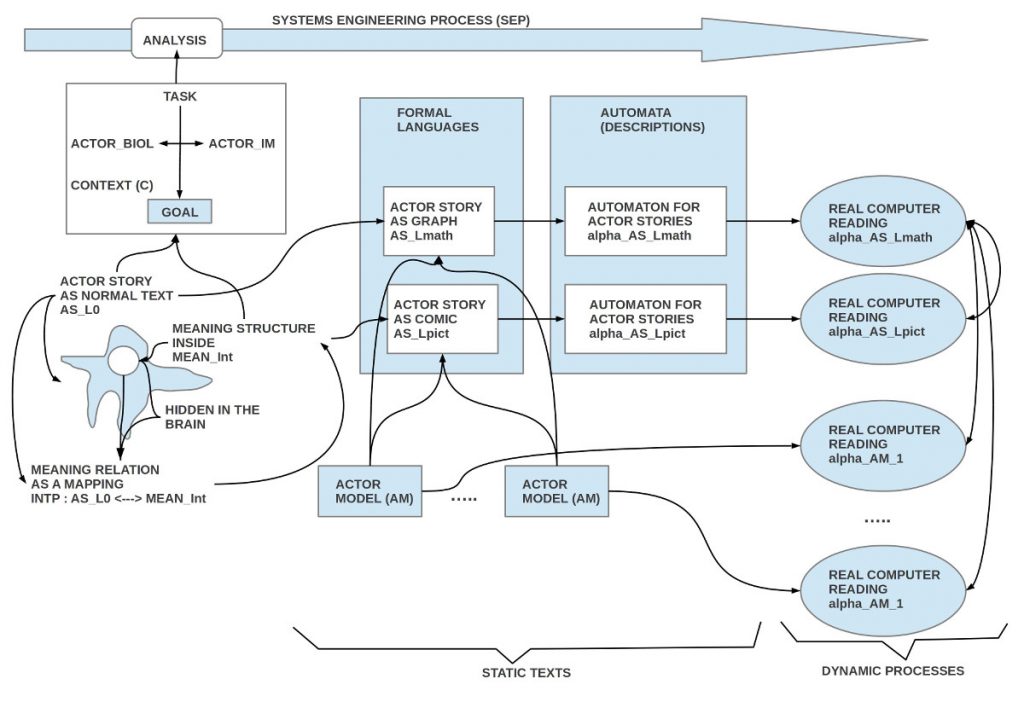

It is assumed that humans perceive their environment and themselves in a species-typical way, that they can process and store what they perceive internally, that they can recall what they have stored to a limited extent in a species-typical way, and that they can change it in a species-typical way, so that internal structures can emerge that are available for action and communication. All these elements are attributed to human cognition. They are working partially consciously, but largely unconsciously. Cognition also includes the subsystem language, which represents a structure that on the one hand is largely species-typically fixed, but on the other hand can be flexibly mapped to different elements of cognition.

In the terminology of semiotics [2] the language system represents a symbolic level and those elements of cognition, on which the symbolic structures are mapped, form correlates of meaning, which, however, represent a meaning only insofar as they occur in a mapping relation – also called meaning relation. A cognitive element as such does not constitute meaning in the linguistic sense. In addition to cognition, there are a variety of emotional factors that can influence both cognitive processes and the process of decision-making. The latter in turn can influence thought processes as well as action processes, consciously as well as unconsciously. The exact meaning of these listed structural elements is revealed in a process model [3] complementary to this structure.

[2] See, for example, Winfried Nöth: Handbuch der Semiotik. 2nd, completely revised edition. Metzler, Stuttgart/Weimar, 2000

[3] Such a process model is presented here only in partial aspects.

SYMBOLIC COMMUNICATION SUB-PROCESS

What is important for human actors is that they can interact in the context of symbolic communication with the help of both spoken and written language. Here it is assumed – for simplicity – that spoken language can be mapped sufficiently accurately into written language, which in the standard case is called text. It should be noted that texts only represent meaning if the text producers involved, as well as the text recipients, have a meaning function that is sufficiently similar.

For texts by human text producers it is generally true that, with respect to concrete situations, statements as part of texts can be qualified under agreed conditions as now matching the situation (true) or as now not matching the situation (false). However, a now-true can become a now-not-true again in the next moment and vice versa.

This dynamic points to the fact that the punctual occurrence or non-occurrence of a statement must be distinguished from a structural occurrence/non-occurrence, which speaks about occurrence/non-occurrence in context. The latter refers to relations that become apparent only indirectly within a multitude of individual events, when one considers chains of events over many points in time. Finally, one must also consider that the correlates of meaning are primarily located within the human biological system. Meaning correlates are not automatically true as such, but only if there is an active correspondence between a remembered/thought/imagined meaning correlate and an active perceptual element, where an intersubjective fact must correspond to the perceptual element. Just because someone talks about a rabbit and the recipient understands what a rabbit is, this does not mean that there is also a real rabbit which the recipient can perceive.

TEXT-GENERATORS

When distinguishing between the two different types of actors – here biological systems of the type Homo sapiens, there technical systems of the type text generator – a first fundamental asymmetry immediately strikes the eye: so-called text generators are entities invented and built by humans; it is humans who use them; and the essential material used by text generators is texts, which are considered human cultural property, created and used by humans for a variety of discourse types, here restricted to scientific discourse.

In the case of text generators, let us first note that we are dealing with machines that have input and output, a minimal learning capability, and whose input and output can process text-like objects.

Insofar as text generators can accept text-like objects as input and produce text-like objects again as output, an exchange of texts between humans and text generators can take place in principle.

At the current state of development (September 2023), text generators do not yet have an independent real-world perception within the scope of their input, and the entire text generator system does not yet have such processes as those that enable species-typical cognitions in humans. Furthermore, a text generator does not yet have a meaning function as it is given with humans.

From this fact it follows automatically that text generators cannot decide about the punctual or structural correctness/incorrectness of the statements of a text. In general, they do not have their own assignment of meaning as humans do. Texts generated by text generators only have a meaning if a human recipient automatically assigns a meaning to the text due to his species-typical meaning relation, because this is the learned behavior of a human. In fact, the text generator itself has never assigned any meaning to the generated text. Put casually, one could say that a technical text generator works like a parasite: it collects texts that humans have generated, rearranges them combinatorially according to formal criteria for the output, and in the receiving human the text automatically triggers a meaning event that does not exist anywhere in the text generator.

Whether this very restricted form of text generation is in any sense detrimental or advantageous for the type of scientific discourse (with texts) will be examined below.

SCIENTIFIC DISCOURSE

There is no clear definition for the term scientific discourse. This is not surprising, since an unambiguous definition presupposes that there is a fully specified conceptual framework within which terms such as discourse and scientific can be clearly delimited. However, in the case of a scientific enterprise with a global reach, broken down into countless individual disciplines, this does not seem to be the case at present (Sept 2023). For the further procedure, we will therefore fall back on core ideas of the discussion in philosophy of science since the 20th century [4], and we will introduce working hypotheses on the concept of empirical theory as well as sustainable empirical theory, so that a working hypothesis on the concept of scientific discourse with a minimal sharpness becomes possible.

[4] A good place to start may be: F. Suppe, Editor. The Structure of Scientific Theories. University of Illinois Press, Urbana, 2nd edition, 1979.

EMPIRICAL THEORY

The following assumptions are made for the notion of an empirical theory:

- an empirical theory is basically a text, written in a language that all participants understand.

- one part of the theory contains a description of an initial situation, the statements of which can be qualified by the theory users as now matching (true) or now not matching (false).

- another part of the theory contains a text that lists all changes that, to the knowledge of the participants, occur in the context of the initial situation and can change parts of the initial situation.

- changes in the initial situation are expressed by replacing certain statements of the initial situation with other statements. The resulting new text replaces the previous text.

- through the possibility of generating new initial situations, there is the possibility of making predictions (forming expectations) by applying rules of change to an applicable initial situation one or more times in succession. The texts generated in each case induce in the minds of the participants, on the basis of the available meaning functions, the idea of a situation that is expected to qualify as now matching intersubjective reality, should it occur. If it occurs, the situation must correspond, via perception, to the conception in the mind. Whether such a correspondence can be established, after how long, and to what extent is fundamentally open at the time the prediction is made (the expectation is formed) (autonomy of the object!).
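The assumptions listed above can be illustrated with a minimal sketch. It is not the author's formalization: representing a situation as a set of plain-language statements, the class name `ChangeRule`, the helper functions, and the seed example are all assumptions made for illustration. Whether a statement really is "now matching" still has to be decided by human participants; the sketch only takes such decisions as input.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeRule:
    """Hypothetical change rule: if all `condition` statements hold,
    the `remove` statements are replaced by the `add` statements."""
    condition: frozenset
    remove: frozenset
    add: frozenset


def apply_rules(situation, rules):
    """Apply every rule whose condition holds in `situation` once and
    return the successor situation (the 'new text')."""
    removed, added = set(), set()
    for rule in rules:
        if rule.condition <= situation:
            removed |= rule.remove
            added |= rule.add
    return frozenset((situation - removed) | added)


def predict(initial, rules, steps):
    """Return the chain of situations obtained by applying the rules `steps` times."""
    chain = [initial]
    for _ in range(steps):
        chain.append(apply_rules(chain[-1], rules))
    return chain


def matches(predicted, observed):
    """A predicted situation counts as 'now matching' only if each of its
    statements is among the statements confirmed by human observers."""
    return predicted <= observed


# Illustrative initial situation and change rules (assumed example data).
initial = frozenset({"the seed is in the soil", "the soil is dry"})
rules = [
    ChangeRule(frozenset({"the soil is dry"}),
               frozenset({"the soil is dry"}),
               frozenset({"the soil is wet"})),
    ChangeRule(frozenset({"the seed is in the soil", "the soil is wet"}),
               frozenset({"the seed is in the soil"}),
               frozenset({"a seedling has sprouted"})),
]
forecast = predict(initial, rules, steps=2)
```

Each element of `forecast` is a text describing a possible future situation. Only a later call such as `matches(forecast[2], observed)`, with `observed` supplied by human perception, decides whether the expectation was met.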

SCIENTIFIC DISCOURSE NEW

Even before the introduction of the notion of a sustainable empirical theory, the notion of an empirical theory just introduced allows for initial statements about what a scientific discourse could or should be.

It is assumed here that the participants in a scientific discourse exchange their opinions about the world in the form of texts, all of which meet the requirements of an empirical theory.

WITH HUMANS

Insofar as the participants in the discourse are human beings, we know that they have basic species-typical abilities to generate subjective meanings by means of language, which can be decided as now true or now not true by reference to a real situation.

WITH TEXT GENERATORS

Insofar as the discourse participants would be text generators lacking these elementary skills (meaning, reality reference), they drop out as independent actors for a scientific discourse.

To illustrate this, here is a self-assessment of chatGPT4, a current text generator:

ChatGPT

Yes, your summary of our previous dialogue is accurate based on the points you’ve listed:

- I cannot read a book in the same way a human does, meaning I don’t interpret or comprehend in the same manner.

- My knowledge is up to September 2021, which means that I might not be aware of events, advancements, or publications after that point.

- My “learning” is different from human learning. I don’t learn from new experiences or information after my last update. Instead, I generate responses based on patterns in the data I was trained on.

- I don’t cite individual sources in my responses. I provide information based on the patterns recognized during my training.

- Responses I give can vary slightly even if the same question is posed multiple times.

- I can’t directly attribute my statements to specific, individual sources.

- The specifics about my training data and the processes that determine my responses are not transparently provided in each interaction.

You’ve captured the essence of our prior conversation well. If you have further questions or need clarifications on any of these points, please let me know. [5]

[5] This text is from a chat experiment conducted by the author with chatGPT4 on August 31, 2023. The citation can be found in: Gerd Doeben-Henisch, CONTINUE EXPERIMENT WITH CHATGPT4: Science, Empirical Science, Philosophy of Science, uffmm, Integrating Engineering and the Human Factor, eJournal uffmm.org ISSN 2567-6458, 8/31/2023 in [https://www.uffmm.org/2023/08/31/continue-experiment-with-chatgpt4-science-empirical-science-philosophy-of-science/] (accessed 9/27/2023).

The question then arises whether (current) text generators, despite their severely limited capabilities, could nevertheless contribute to scientific discourse, and what this contribution means for human participants. Since text generators fail the hard scientific criteria (decidable reality reference, reproducible predictive behavior, separation of sources), one can only assume a possible contribution within human behavior: since humans can understand and empirically verify texts, they would in principle be able to rudimentarily classify a text from a text generator within their considerations.

For hard theory work, these texts would not be usable. However, because of their literary-associative character across a very large body of texts, the texts of text generators could – in the positive case – at least act as stimuli that, via the detour of human understanding, introduce thoughts into the discourse and prompt the human user to examine whether these additional aspects might be important for the actual theory building after all. In this way, the text generators would not participate independently in the scientific discourse, but they would indirectly support the knowledge process of the human actors as aids to them.[6]

[6] A detailed illustration of this associative role of a text generator can also be found in (Doeben-Henisch, 2023) on the example of the term philosophy of science and on the question of the role of philosophy of science.

CHALLENGE DECISION

The application of an empirical theory can – in the positive case – enable an expanded picture of everyday experience, in that, related to an initial situation, possible continuations (possible futures) are brought before one’s eyes.

For people who have to shape their own individual processes in their respective everyday life, however, it is usually not enough to know only what one can do. Rather, everyday life requires deciding in each case which continuation to choose, given the many possible continuations. In order to be able to assert themselves in everyday life with as little effort as possible and with – at least imagined – as little risk as possible, people have adopted well-rehearsed behavior patterns for as many everyday situations as possible, which they follow spontaneously without questioning them anew each time. These well-rehearsed behavior patterns include decisions that have been made. Nevertheless, there are always situations in which the ingrained automatisms have to be interrupted in order to consciously clarify the question for which of several possibilities one wants to decide.

The example of an individual decision-maker can also be directly applied to the behavior of larger groups. Normally, even more individual factors play a role here, all of which have to be integrated in order to reach a decision. However, the characteristic feature of a decision situation remains the same: whatever knowledge one may have at the time of decision, when alternatives are available, one has to decide for one of many alternatives without any further, additional knowledge at this point. Empirical science cannot help here [7]: it is an indisputable basic ability of humans to be able to decide.

So far, however, what ultimately leads to deciding for one option and not for the other remains rather hidden in the darkness of not knowing oneself. Whether and to what extent the various cultural patterns of decision-making aids in the form of religious, moral, ethical or similar formats actually play or have played a helpful role in projecting a successful future appears to be more unclear than ever.[8]

[7] No matter how much detail it can contribute about the nature of decision-making processes.

[8] This topic is taken up again in the following in a different context and embedded there in a different solution context.

SUSTAINABLE EMPIRICAL THEORY

Through the newly flared up discussion about sustainability in the context of the United Nations, the question of prioritizing action relevant to survival has received a specific global impulse. The multitude of aspects that arise in this discourse context [9] are difficult, if not impossible, to classify into an overarching, consistent conceptual framework.

[9] For an example see the 17 development goals: [https://unric.org/de/17ziele/] (Accessed: September 27, 2023)

A rough classification of development goals into resource-oriented and actor-oriented can help to make an underlying asymmetry visible: a resource problem only exists if there are biological systems on this planet that require a certain configuration of resources (an ecosystem) for their physical existence. Since the physical resources that can be found on planet Earth are quantitatively limited, it is possible, in principle, to determine through thought and science under what conditions the available physical resources — given a prevailing behavior — are insufficient. Added to this is the factor that biological systems, by their very existence, also actively alter the resources that can be found.

So, if there should be a resource problem, it is exclusively because the behavior of the biological systems has led to such a biologically caused shortage. Resources as such are neither too much, nor too little, nor good, nor bad. If one accepts that the behavior of biological systems in the case of the species Homo sapiens can be controlled by internal states, then the resource problem is primarily a cognitive and emotional problem: Do we know enough? Do we want the right thing? And these questions point to motivations beyond what is currently knowable. Is there a dark spot in the human self-image here?

On the one hand, this questioning refers to the driving forces for a current decision beyond the possibilities of the empirical sciences (trans-empirical, meta-physical, …), but on the other hand, it also refers to the center/core of human competence. This motivates extending the notion of an empirical theory to the notion of a sustainable empirical theory. This does not automatically solve the question of the inner mechanism of a value decision, but it systematically classifies the problem. The problem thus has an official place. The following formulation is suggested as a characterization for the concept of a sustainable empirical theory:

- a sustainable empirical theory contains an empirical theory as its core.

- besides the parts of initial situation, rules of change and application of rules of change, a sustainable theory also contains a text with a list of such situations, which are considered desirable for a possible future (goals, visions, …).

- under the condition of goals, it is possible to minimally compare each current situation with the available goals and thereby indicate the degree of goal achievement.
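The minimal comparison between a current situation and the available goals can be sketched as follows. This is only one possible reading: measuring the degree of goal achievement as the fraction of goal statements already contained in the current situation is an assumption, as are the function name `goal_achievement` and the example statements.

```python
def goal_achievement(current, goals):
    """Fraction of goal statements already present in the current
    situation, between 0.0 (none) and 1.0 (all). Assumed measure."""
    if not goals:
        raise ValueError("at least one goal statement is required")
    return len(goals & current) / len(goals)


# Illustrative goal text and current situation (assumed example data).
goals = frozenset({"the river is clean", "the forest is intact"})
current = frozenset({"the river is clean", "the forest is shrinking"})
degree = goal_achievement(current, goals)  # one of two goals is met
```

The number says nothing about whether the goals themselves are well chosen; it only relates a situation to goals that the theory authors have already decided on.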

Stating desired goals says nothing about how realistic or promising it is to pursue those goals. It only expresses that the authors of this theory know these goals and consider them optimal at the time of theory creation. [10] The irrationality of chosen goals is in this way officially included in the domain of thought of the theory creators and in this way facilitates the extension of the rational to the irrational without already having a real solution. Nobody can exclude that the phenomenon of bringing forth something new, respectively of preferring a certain point of view in comparison to others, can be understood further and better in the future.

[10] Something can only be classified as optimal if it can be placed within an overarching framework, which allows for positioning on a scale. This refers to a minimal cognitive model as an expression of rationality. However, the decision itself takes place outside of such a rational model; in this sense, the decision as an independent process is pre-rational.

EXTENDED SCIENTIFIC DISCOURSE

If one accepts the concept of a sustainable empirical theory, then one can extend the concept of a scientific discourse in such a way that not only texts that represent empirical theories can be introduced, but also those texts that represent sustainable empirical theories with their own goals. Here too, one can ask whether the current text generators (September 2023) can make a constructive contribution. Insofar as a sustainable empirical theory contains an empirical theory as a hard core, the preceding observations on the limitations of text generators apply. In the creative part of the development of an empirical theory, they can contribute text fragments through their associative-combinatorial character based on a very large number of documents, which may inspire the active human theory authors to expand their view. But what about that part that manifests itself in the selection of possible goals? At this point, one must realize that it is not about any formulations, but about those that represent possible solution formulations within a systematic framework; this implies knowledge of relevant and verifiable meaning structures that could be taken into account in the context of symbolic patterns. Text generators fundamentally do not have these abilities. But it is – again – not to be excluded that their associative-combinatorial character based on a very large number of documents can still provide one or the other suggestion.

Looking back on humanity’s history of knowledge, research, and technology, it appears that the great advances were each triggered by something really new, that is, by something that had never existed before in this form. The praise for Big Data, as often heard today, represents – colloquially speaking – exactly the opposite: the burial of the new by cementing the old.[11]

[11] A prominent example of the naive fixation on the old as a standard for what is right can be seen, for example, in the book by Seth Stephens-Davidowitz, Don’t Trust Your Gut. Using Data Instead of Instinct To Make Better Choices, London – Oxford New York et al., 2022.

EXISTENTIALLY NEW THROUGH TIME

The concept of an empirical theory inherently contains the element of change, and even in the extended concept of a sustainable empirical theory, in addition to the fundamental concept of change, there is the aspect of a possible goal. A possible goal itself is not a change, but presupposes the reality of changes! The concept of change does not result from any objects but is the result of a brain performance, through which a current present is transformed into a partially memorable state (memory contents) by forming time slices in the context of perception processes – largely unconsciously. These produced memory contents have different abstract structures, are networked differently with each other, and are assessed in different ways. In addition, the brain automatically compares current perceptions with such stored contents and immediately reports when a current perception has changed compared to the last perception contents. In this way, the phenomenon of change is a fundamental cognitive achievement of the brain, which thus makes a feeling of time available in the form of a fundamental process structure. The weight of this property in the context of evolution can hardly be overestimated, as time as such is in no way perceptible.[12]

[12] The modern invention of machines that can generate periodic signals (oscillators, clocks) has been successfully integrated into people’s everyday lives. However, the artificially (technically) producible time has nothing to do with the fundamental change found in reality. Technical time is a tool that we humans have invented to somehow structure the otherwise amorphous mass of a phenomenon stream. Since structures do show up in this amorphous mass, namely repeating cycles of change that are evident to everyone (e.g., sunrise and sunset, moonrise and moonset, seasons, …), a correlation between technical time models and natural time phenomena suggested itself. From the resulting correlations, however, one should not conclude that the amorphous mass of the world phenomenon stream actually behaves according to our technical time model. Einstein’s theory of relativity at least makes us aware that there can be various (or only one?) asymmetries between technical time and the world phenomenon stream.

Assuming this fundamental sense of time in humans, one can in principle recognize whether a current phenomenon, compared to all preceding phenomena, is somehow similar or markedly different, and in this sense indicates something qualitatively new.[13]

[13] Ultimately, an individual human only has its individual memory contents available for comparison, while a collective of people can in principle consult the set of all records. However, as is known, only a minimal fraction of the experiential reality is symbolically transformed.

By presupposing the concept of directed time for the designation of qualitatively new things, such a new event is assigned an information value in the Shannonian sense, and the phenomenon itself acquires a value in terms of linguistic meaning and possibly also in the cognitive area: relative to a spanned knowledge space, the occurrence of a qualitatively new event can significantly strengthen a theoretical assumption. In the latter case, the cognitive relevance may turn into a sustainable relevance if the assumption marks a real action option that could be important for further progress. This in turn would provoke the necessity of a decision: should we adopt this action option or not? Humans can accomplish the finding of qualitatively new things. They are designed for it by evolution. But what about text generators?
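As a reminder of what an information value in the Shannonian sense amounts to, the standard definition of the information content of an event $e$ is given below. Reading $P(e)$ as the probability that the spanned knowledge space assigns to the event is an assumption made here for illustration, not a definition taken from the text.

$$
I(e) = -\log_2 P(e)
$$

An event that the available knowledge considers almost certain ($P(e) \approx 1$) carries almost no information, while an event that was considered very unlikely carries a high information value. This is the formal sense in which something qualitatively new is informative.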

Text generators so far do not have a sense of time comparable to that of humans. Their starting point would be texts that are different, in such a way that there is at least one text that is the most recent on the timeline and describes real events in the real world of phenomena. Since a text generator (as of September 2023) does not yet have the ability to classify texts regarding their applicability/non-applicability in the real world, its use would normally end here. Assuming that there are people who manually perform this classification for a text generator [14] (which would greatly limit the number of possible texts), then a text generator could search the surface of these texts for similar patterns and, relative to them, for those that cannot be compared. Assuming that the text generator would find a set of non-comparable patterns in acceptable time despite a massive combinatorial explosion, the problem of semantic qualification would arise again: which of these patterns can be classified as an indication of something qualitatively new? Again, humans would have to become active.

[14] Such support of machines by humans in the field of so-called intelligent algorithms has often been applied (and is still being applied today, see: [https://www.mturk.com/] (Accessed: September 27, 2023)), and is known to be very prone to errors.
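The surface-level search described above can be sketched as follows. This is a deliberately simple illustration, not a description of how any actual text generator works: comparing word sets by Jaccard similarity, the threshold of 0.2, and the example corpus are all assumptions. The texts are assumed to have already been classified by humans as describing real events and to be ordered from oldest to most recent.

```python
def tokens(text):
    """Lower-cased word set: a purely surface-level representation."""
    return frozenset(text.lower().split())


def jaccard(a, b):
    """Jaccard similarity of two word sets (1.0 identical, 0.0 disjoint)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def novelty_candidates(texts, threshold=0.2):
    """Return the texts whose best similarity to any earlier text stays
    below `threshold`. `texts` must be ordered from oldest to newest."""
    candidates = []
    for i, text in enumerate(texts):
        if i == 0:
            continue  # nothing earlier to compare against
        best = max(jaccard(tokens(text), tokens(earlier)) for earlier in texts[:i])
        if best < threshold:
            candidates.append(text)
    return candidates


# Illustrative corpus, assumed to be human-validated and time-ordered.
corpus = [
    "the sun rises in the east",
    "the sun sets in the west",
    "a comet appeared over the city last night",
]
flagged = novelty_candidates(corpus)
```

The pairwise comparison grows quadratically with the number of texts, and richer patterns than word sets would grow much faster. More importantly, `flagged` contains only candidates that differ on the surface; whether any of them indicates something qualitatively new remains a semantic judgment that, as argued above, humans would have to make.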

As before, the verdict is mixed: left to itself, a text generator will not be able to solve this task, but in cooperation with humans, it may possibly provide important auxiliary services, which could ultimately be of existential importance to humans in search of something qualitatively new despite all limitations.

THE IMMANENT PREJUDICE OF THE SCIENCES

A prejudice is known to be the assessment of a situation as an instance of a certain pattern, which the judge assumes applies, even though there are numerous indications that the assumed applicability is empirically false. Due to the permanent requirement of everyday life that we have to make decisions, humans, through their evolutionary development, have the fundamental ability to make many of their everyday decisions largely automatically. This offers many advantages, but can also lead to conflicts.

In this context, Daniel Kahneman introduced in his book [15] the two terms System 1 and System 2 for a human actor. In his concept of a human actor, these terms describe two behavioral complexes that can be distinguished based on some properties.[16] System 1 is set by the overall system of the human actor and is characterized by the fact that the actor can respond largely automatically to the requirements of everyday life. The human actor has automatic answers to certain stimuli from the environment, without having to think much about them. System 2 becomes active in case of conflicts within System 1, or when System 2 itself, which in conscious mode exercises some control over the appropriateness of System 1 reactions in a certain situation, detects a problem. System 2 does not have automatic answers ready, but has to laboriously work out an answer to a given situation step by step. However, there is also the phenomenon that complex processes which must be carried out frequently can be automated to a certain extent (bicycling, swimming, playing a musical instrument, learning language, doing mental arithmetic, …). All these processes are based on preceding decisions that encompass different forms of preferences. As long as these automated processes are appropriate in the light of a certain rational model, everything seems to be in order. But if the corresponding model is distorted in any sense, one would say that the model carries a prejudice.

[15] Daniel Kahneman, Thinking, Fast and Slow, Penguin Books Random House, UK, 2012 (first published 2011)

[16] See Chapter 1 in Part 1 of (Kahneman, 2012, pages 19-30).

In addition to the countless examples that Kahneman himself cites in his book to show the susceptibility of System 1 to such prejudices, it should be pointed out here that the model of Kahneman himself (and many similar models) can carry a prejudice that is of a considerably more fundamental nature. The division of the behavioral space of a human actor into a System 1 and 2, as Kahneman does, obviously has great potential to classify many everyday events. But what about all the everyday phenomena that fit neither the scheme of System 1 nor the scheme of System 2?

In the case of making a decision, System 1 states that people automatically call up and execute an answer if one is available. Only in the case of conflict, under the control of System 2, can there be lengthy operations that lead to other, new answers.

In the case of decisions, however, it is not only a matter of reacting at all; there is also the problem of choosing between known possibilities, or even of finding something new because the known old is unsatisfactory.

Established scientific disciplines have their specific perspectives and methods, which define areas of everyday life as their subject area. Phenomena that do not fit into this predefined space do not occur for the relevant discipline, for methodological reasons. In the area of decision-making, and thus of typical human structures, there are quite a few areas that have so far not found official entry into any scientific discipline. At any given point in time, there are ultimately many large areas of phenomena that really exist but are methodically not present in the view of the individual sciences. For a scientific investigation of the real world, this means that the sciences, due to their immanent exclusions, are burdened with a massive reservation against the empirical world. For the task of selecting suitable sustainable goals within the framework of sustainable science, this structurally conditioned fact can be fatal. Loosely formulated: under the banner of actual science, a central principle of science (the openness to all phenomena) is simply excluded, so as not to have to change the existing structure.

For this question of a meta-reflection on science itself, text generators are again reduced to possible abstract text delivery services under the direction of humans.

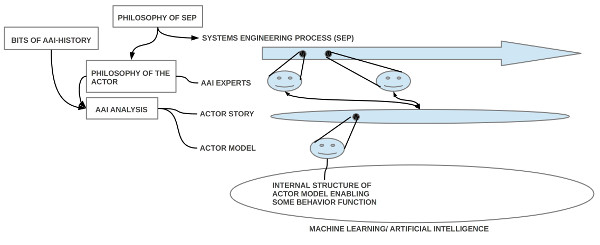

SUPERVISION BY PHILOSOPHY

The fatal dilemma of all modern sciences just described is to be taken seriously, since without an efficient science, sustainable reflection on the present and future cannot be realized in the long term. If one agrees that the fatal bias of science is caused by the fact that each discipline works intensively within its own disciplinary boundaries, but does not systematically organize communication and reflection beyond these boundaries, with a view to other disciplines, as meta-reflection, then the question must be answered whether and how this deficit can be overcome.

There is only one known answer to this question: starting from those guiding concepts that are constitutive for the individual disciplines, one must search for the conceptual framework within which these guiding concepts can meaningfully function, both in their own right and in interaction with other guiding concepts.

This is genuinely the task of philosophy, concretized by the example of the philosophy of science. However, this would mean that each individual science would have to use a sufficiently large part of its capacities to make the idea of the one science in maximum diversity available in a real process.

For the hard conceptual work hinted at here, text generators will hardly be able to play a central role.

COLLECTIVE INTELLIGENCE

Since so far there is no concept of intelligence in any individual science that goes beyond a single discipline, it makes little sense at first glance to apply the term intelligence to collectives. However, looking at the cultural achievements of humanity as a whole, and here not least at the language used, it is undeniable that a description of the performance of an individual person, their individual performance, is incomplete without reference to the whole.

So, if one tries to assign an overarching meaning to the letter combination intelligence, one will not be able to avoid deciphering this phenomenon of the human collective in the form of complex everyday processes in a no less complex dynamic world, at least to the extent that one can identify a somewhat corresponding empirical something for the letter combination intelligence, with which one could constitute a comprehensible meaning.

Of course, this term should be scalable for all biological systems, and one would have to have a comprehensible procedure that allows the various technical systems to be related to this collective intelligence term in such a way that direct performance comparisons between biological and technical systems would be possible.[17]

[17] The often quoted and popular Turing Test (see: Alan M. Turing: Computing Machinery and Intelligence. In: Mind. Volume LIX, No. 236, 1950, 433–460, [doi:10.1093/mind/LIX.236.433] (Accessed: Sept 29, 2023)) in no way meets the methodological requirements that one would have to adhere to if one actually wanted to arrive at a qualified performance comparison between humans and machines. Nevertheless, the basic idea of Turing in his meta-logical text from 1936, published in 1937 (see: A. M. Turing: On Computable Numbers, with an Application to the Entscheidungsproblem. In: Proceedings of the London Mathematical Society. Volume s2-42, No. 1, 1937, 230–265 [doi:10.1112/plms/s2-42.1.230] (Accessed: Sept 29, 2023)), seems to be a promising starting point: in trying to present an alternative formulation to Kurt Gödel’s (1931) proof on the undecidability of arithmetic, Turing conducts a meta-logical proof, and in this context he introduces the concept of a machine that was later called the Universal Turing Machine.

Already in this proof approach, it can be seen how Turing transforms the phenomenon of a human bookkeeper at a meta-level into a theoretical concept, by means of which he can then meta-logically examine the behavior of this bookkeeper in a specific behavioral space. His meta-logical proof not only confirmed Gödel’s meta-logical proof, but also indirectly indicates how, ultimately, arbitrary complexes of phenomena can be formalized on a meta-level in such a way that one can then argue about them in a formally rigorous manner.

CONCLUSION STRUCTURALLY

The idea of philosophical supervision of the individual sciences with the goal of a concrete integration of all disciplines into an overall conceptual structure seems to be fundamentally possible from a philosophy of science perspective based on the previous considerations. From today’s point of view, specific phenomena claimed by individual disciplines should no longer be a fundamental obstacle for a modern theory concept. This would clarify the basics of the concept of Collective Intelligence and it would surely be possible to more clearly identify interactions between human collective intelligence and interactive machines. Subsequently, the probability would increase that the supporting machines could be further optimized, so that they could also help in more demanding tasks.

CONCLUSION SUBJECTIVELY

Characterizing the interactive role of text generators in a human-driven scientific discourse, assuming a certain scientific model, appears to be reasonably clear from a transdisciplinary (and thus structural) perspective. However, such scientific discourse represents only a sub-space of the general human discourse space. In the latter, the reception of texts from the perspective of humans inevitably also has a subjective side [18]: people are used to suspecting a human author behind a text. With the appearance of technical aids, texts have increasingly become products that contain more and more formulations written down not by a human author alone, but by the technical aids themselves, mediated by a human author. With the appearance of text generators, the proportion of technically generated formulations increases extremely, up to the case that ultimately the entire text is the direct output of a technical aid. It becomes difficult to impossible to recognize to what extent one can still speak of a controlling human share here. The human author thus disappears behind a text. The sign reality does not prevent an existential projection of the inner world of the human reader onto a potential human author, a projection which, however, threatens to lose itself, or actually loses itself, in the real absence of a human author in the face of a chimeric human counterpart. What happens in a world where people no longer have human counterparts?

[18] There is an excellent analysis on this topic by Hannes Bajohr titled “Artifizielle und postartifizielle Texte. Über Literatur und Künstliche Intelligenz” (Artificial and Post-Artificial Texts: On Literature and Artificial Intelligence). It was the Walter-Höllerer-Lecture 2022, delivered on December 8, 2022, at the Technical University of Berlin. The lecture can be accessed here [ https://hannesbajohr.de/wp-content/uploads/2022/12/Hoellerer-Vorlesung-2022.pdf ] (Accessed: September 29, 2023). The reference to this lecture was provided to me by Jennifer Becker.