Author: Gerd Doeben-Henisch

Changelog: March 2, 2025 – March 2, 2025

Email: info@uffmm.org

TRANSLATION: The following text is a translation from a German version into English. For the translation I am using the software @chatGPT4o with manual modifications.

CONTENT TREE

This text is part of the TOPIC Philosophy of Science.

CONTEXT

This is not another interim reflection but a continuation in the main thread of the text project ‘What is Life?’

MAIN THREADS: What is Life?

- Jan 17, 2025 : “WHAT IS LIFE? WHAT ROLE DO WE PLAY? IS THERE A FUTURE?”

- Jan 18, 2025 : “WHAT IS LIFE? … DEMOCRACY – CITIZENS”

- Jan 21, 2025 : WHAT IS LIFE? … PHILOSOPHY OF LIFE

- Feb 10, 2025 : WHAT IS LIFE? … If life is ‘More,’ ‘much more’ …

INSERTIONS SO FAR:

- Feb 15, 2025 : INSERTION: A Brief History of the Concept of Intelligence and Its Future

- Feb 18, 2025 : INSERTION: BIOLOGICAL INTELLIGENCE NEEDS LEARNING. Structural Analysis

- Feb 20, 2025 : INSERTION : INTELLIGENCE – LEARNING – KNOWLEDGE – MATERIAL CONDITIONS; AI

TRANSITION

In text No. 4, “WHAT IS LIFE? … When Life is ‘More,’ ‘Much More’ …”, there is a central passage that should be recalled here. Following the revelation of the empirically strong acceleration in the development of complexity of life on this planet, it states:

“The curve tells the ‘historical reality’ that ‘classical biological systems’ up to Homo sapiens were able to generate with their ‘previous means.’ However, with the emergence of the ‘Homo’ type, and especially with the life form ‘Homo sapiens,’ entirely new properties come into play. With the sub-population of Homo sapiens, there is a life form that, through its ‘cognitive’ dimension and its novel ‘symbolic communication,’ can generate the foundations for action at an extremely faster and more complex level.”

Following this “overall picture,” much suggests that the emergence of Homo sapiens (that is, us) after approximately 3.5 billion years of evolution, preceded by about 400 million years of molecular development, does not occur randomly. It is hard to overlook that the emergence of Homo sapiens lies almost at the “center of the developmental trajectory.” This fact can—or must?—raise the question of whether a “special responsibility” for Homo sapiens derives from this, concerning the “future of all life” on this planet—or even beyond? This leads to the second quotation from text No. 4:

“How can a ‘responsibility for global life’ be understood by us humans, let alone practically implemented by individual human beings? How should humans, who currently live approximately 60–120 years, think about a development that must be projected millions or even more years into the future?”

Such “responsibility with a view toward the future” would—from the perspective of life as a whole—only make sense if Homo sapiens were indeed the “only currently existing life form” that possesses exactly those characteristics required for “assuming responsibility” in this current phase of life’s development.

PRELIMINARY NOTE

The following text will gradually explain how all these elements are interconnected. At this stage, references to relevant literature will be kept to a minimum, as each section would otherwise require countless citations. Nevertheless, occasional remarks will be made. If the perspective presented in the “What is Life” texts proves fundamentally viable, it would need to be further refined and embedded into current specialized knowledge in a subsequent iteration. This process could involve contributions from various perspectives. For now, the focus is solely on developing a new, complex working hypothesis, grounded in existing knowledge.

THE HOMO SAPIENS EVENT

In modern science fiction novels and films, extraterrestrials are a popular device used to introduce something extraordinary to planet Earth—whether futuristic advancements or adventurous developments from the future appearing on Earth. Of course, these are thought constructs, through which we humans tell ourselves stories, as storytelling has been an essential part of human culture since the very beginning.

Against this backdrop, it is remarkable that the Homo Sapiens Event (HSE) has not yet received a comparable level of empathic attention. Yet, the HSE possesses all the ingredients to surpass even the boldest science fiction novels and films known to us. The developmental timeline on planet Earth alone spans approximately 3.9 billion years.

If we open ourselves to the idea that the biological might be understood as the direct unfolding of properties inherently present in the non-biological—and thus ultimately in energy itself, from which the entire known universe emerged—then we are dealing with a maximal event whose roots are as old as the known universe.

Ultimately—since energy remains more unknown than known to us—the HSE, as a property of energy, could even be older than the known universe itself.

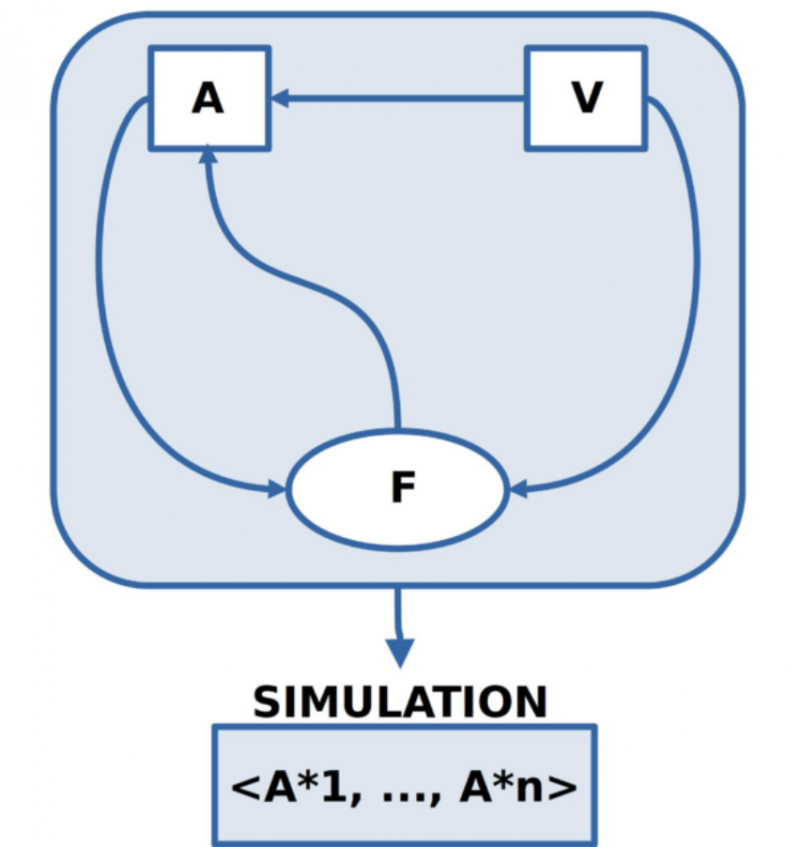

IMAGE 1: Homo Sapiens Event (HSE)

PHILOSOPHICAL APPROACH

In this text, the Homo Sapiens Event (HSE) is discussed in written form because this is the only way in which the author’s brain can exchange thoughts with the brains of readers. Regardless of the content, without some form of communication there can be no exchange between different brains.

For Homo sapiens, such communication has, from the very beginning, occurred through a symbolic language, embedded in a variety of actions, gestures, facial expressions, vocal tones, and more. Therefore, it makes sense to render this mechanism of symbolic language within a human communication process transparent enough to understand when and what kind of content can be exchanged via symbolic communication.

When attempting to explain this mechanism of symbolic communication, it becomes evident that certain preconditions must be made explicit in advance—without these, the subsequent explanation cannot function.

To encompass the broadest possible perspective on the symbolic communication occurring here, the author of this text adopts the term “philosophical perspective”—in the sense that it is intended to include all known and conceivable perspectives.

Three Isolated Perspectives (Within Philosophy)

In addition to the perspective of biology (along with many other supporting disciplines), which has been used to describe the development of the biological on planet Earth up to the Homo Sapiens Event (HSE), some additional perspectives will now be introduced. These perspectives, while grounded in the biological framework, can provide valuable insights:

Empirical Neuroscience: It is concerned with the description and analysis of observable processes in the human brain.

Phenomenology: A subdiscipline of both philosophy and psychology, it serves to describe and analyze subjective experiences.

Empirical Psychology: It focuses on the description and analysis of observable human behavior.

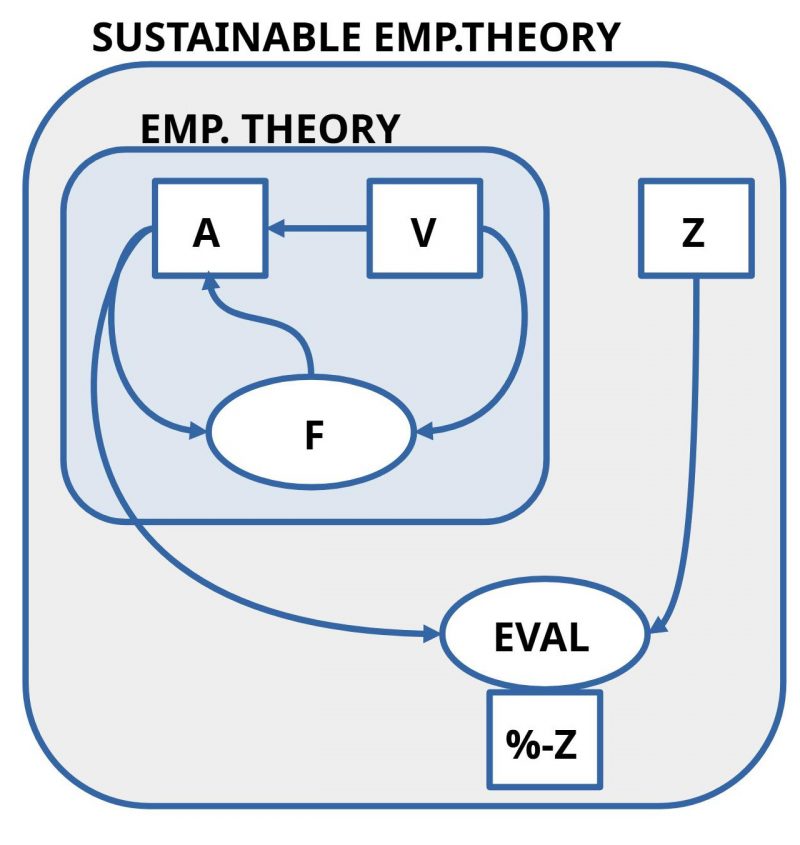

IMAGE 2: (Hand-drawn sketch, illustrating the developmental process) Philosophical Perspective with the subdisciplines ‘Phenomenology,’ ‘(Empirical) Psychology,’ and ‘Neuroscience’

If these three perspectives are arranged side by side, the phenomenological view includes only our own (subjective) experiences, without a direct connection to the body or the world outside the body. This is the perspective with which every human is born and which accompanies them throughout life as the “normal view of things.”

From the perspective of empirical psychology, observable behavior of humans is the central focus (other life forms can also be studied in this way, though this falls more under biology). However, the phenomena of subjective experience are not accessible within the framework of empirical psychology. While the observable properties of the brain as an empirical object, as well as those of the body, are in principle accessible to empirical psychology, the empirical properties of the brain are generally assigned to (empirical) neuroscience, and those of the body to (empirical) physiology.

From the perspective of (empirical) neuroscience, the observable properties of the brain are accessible, but not the phenomena of subjective experience or observable behavior (nor the observable properties of the body).

It becomes clear that in the chosen systematic approach to scientific perspectives, each discipline has its own distinct observational domain, which is completely separate from the observational domains of the other disciplines! This means that each of these three perspectives can develop views of its object that differ fundamentally from those of the others. Considering that all three perspectives deal with the same real object—concrete instances of Homo sapiens (HS)—one must ask: What status should we assign to these three fundamentally different perspectives, along with their partial representations of Homo sapiens? Must we, in the scientific view, divide one material object into three distinct readings of Homo sapiens (HS): the HS-Phenomenal, the HS-Behavioral, and the HS-Brain?

In scientific practice, researchers are, of course, aware that the contents of the individual observational perspectives interact with one another in some way. Science today knows that subjective experiences (Ph) strongly correlate with certain brain events (N). Similarly, it is known that certain behaviors (Vh) correlate both with subjective experiences (Ph) and with brain events (N). In order to at least observe these interactions between different domains (Ph-Vh, Ph-N, N-Vh), interdisciplinary collaborations have long been established, such as Neurophenomenology (N-Ph) and Neuropsychology (N-Vh). The relationship between psychology and phenomenology is less clear. Early psychology was heavily introspective and thus hardly distinguishable from pure phenomenology, while empirical psychology still struggles with theoretical clarity today. The term “phenomenological psychology (Ph-Vh)” appears occasionally, though without a clearly defined subject area.

While there are some interdisciplinary collaborations, a fully integrated perspective is still nowhere to be found.

The following section will attempt to present a sketch of the overall system, highlighting important subdomains and illustrating the key interactions between these areas.

Sketch of the Overall System

The following “sketch of the overall system” establishes a conceptual connection between the domains of subjective experiences (Ph), brain events (N), bodily events (BDY), the environment of the body (W), and the observable behavior (Vh) of the body in the world.

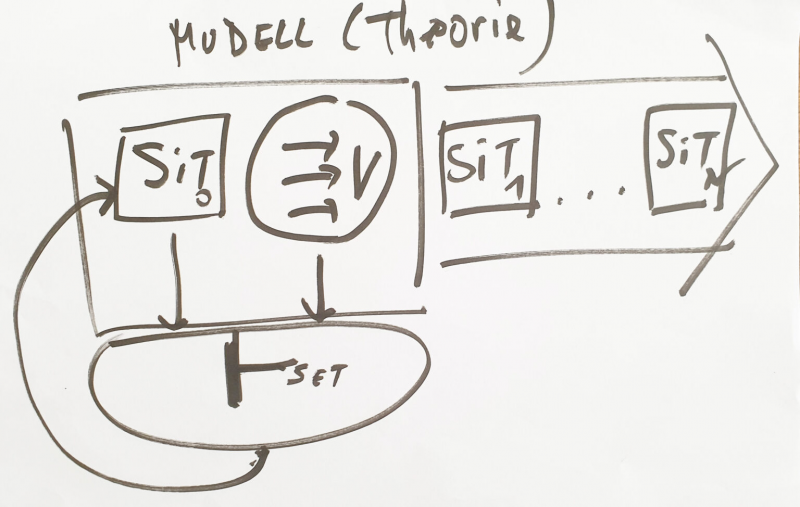

IMAGE 3: (Hand-drawn sketch, illustrating the developmental process) Depicting the following elements: (1) Subjective experiences (Ph), (2) Brain events (N), (3) Bodily events (BDY), (4) Observable behavior (Vh) of the body in the world, (5) The environment of the body (W). In the lower-left corner of the image, a concrete instance of Homo sapiens (HS) is indicated, observing the world (W) along with the various bodies (BDY) of other Homo sapiens individuals. This HS can document its observations in the form of a text, using language (L).

IMAGE 3b: Hand-drawn sketch, illustrating the developmental process – The core idea for the concept of ‘Contextual Consciousness (CCONSC)’

As can be seen, the different domains are numbered from (1) to (5), with number (1) assigned to the domain of subjective experiences (Ph). This is motivated by the fact that, due to the structure of the human body, we perceive ourselves and all other events in the form of such subjective experiences as phenomena. Where these phenomena originate—whether from the brain, the body, or the surrounding world—is not directly apparent from the phenomena themselves. They are our phenomena.

While philosophers like Kant, and all his contemporaries, were still limited to considering the possible world and themselves solely from the perspective of their own phenomena, the empirical sciences have, since around 1900, gradually uncovered the processes behind the phenomena, localized in the brain, allowing them to be examined more concretely. Step by step, increasingly precise temporal correlations between brain events (N) and subjective experiences (Ph) were discovered.

One significant breakthrough was the ability to establish a temporal relationship between subjective experiences (Ph) and brain events (N). This suggested that while our subjective experiences cannot be measured directly as experiences, their temporal relationships with brain events allow for the localization of specific areas in the brain whose functioning appears to be a prerequisite for our subjective experience. This also provided a first empirical concretization of the common concept of consciousness, which can be formulated as a working hypothesis:

What we refer to as consciousness (CONSC, 1) corresponds to subjective experiences (Ph) that are enabled by brain events (N) occurring in specific areas of the brain. How exactly this can be understood will be explained further below.

The brain events (N) localized in the brain (BRAIN, 2) form a complex event space that has been increasingly researched since around 1900. It is generally clear that this space is highly dynamic, which manifests itself in the fact that all events interact with each other in multiple ways. The brain is structurally distinct from the rest of the body, but at the same time there are exchange processes between the body (BDY, 3) and the brain’s event space (BRAIN, 2). This exchange occurs via interfaces that can (i) translate bodily events into brain events and (ii) translate brain events into bodily events.

Examples of (i) include our sensory organs (eyes, ears, nose), which transform light, sound, or airborne molecules into brain events. Examples of (ii) include brain events that, for instance, activate muscles, leading to movements, or regulate glandular secretions, which influence bodily processes in various ways.

The body space (BDY, 3) is approximately 450 times larger than the space of brain events. It consists of multiple regions known as organs, which have complex internal structures and interact in diverse ways. Bodily events also maintain a complex exchange with brain events.

With the surrounding world (W, 5), there are two directions of exchange. In the outward direction, there are (i) interfaces where bodily events appear as excretions in the event space of the world (W), and (ii) bodily events that are directly controlled by brain events (e.g., in the case of movements). Together, these two forms of events constitute the OUTPUT (4a) of the body into the surrounding world (W). Conversely, there is also an INPUT (4b) from the world into the body’s event space. Here, we can distinguish between (i) events of the world that directly enter the body (e.g., food intake) and (ii) events of the world that, through sensory interfaces of the body, are translated into brain events (e.g., seeing, hearing).

Given this setup, an important question arises:

How does the brain distinguish among the vast number of brain events (N)—whether an event is (i) an N originating from within the brain itself, (ii) an N originating from bodily events (BDY), or (iii) an N originating—via the body—from the external world (W)?

In other words: How can the brain recognize whether a given brain event (N) is (i) N from N, (ii) N from BDY, or (iii) N from W?
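The problem behind this question can be made concrete with a small Python sketch. It is only an illustration under a stated assumption, not part of the original argument: it assumes that the interfaces translate every source event into the same kind of brain event, so that the origin is not carried along in the event itself. The names `Origin`, `BrainEvent`, and `to_brain_event` are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Origin(Enum):
    BRAIN = "N"    # event caused by another brain event
    BODY = "BDY"   # event caused by a bodily event
    WORLD = "W"    # event caused, via the body, by the external world


@dataclass(frozen=True)
class BrainEvent:
    intensity: float  # the only information this toy brain event carries


def to_brain_event(origin: Origin, intensity: float) -> BrainEvent:
    # The interface translates any source event into a brain event.
    # ASSUMPTION: the origin is dropped here. This models the problem,
    # not a solution to it.
    return BrainEvent(intensity=intensity)


# Three events with different origins but the same intensity ...
events = [to_brain_event(origin, 0.5) for origin in Origin]

# ... are indistinguishable once they have become brain events.
indistinguishable = len(set(events)) == 1
```

In this sketch, any ability to tell "N from N", "N from BDY", and "N from W" apart would have to come from additional structure that the brain adds itself, which is exactly what the question asks for.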

This question will be addressed further with a proposed working hypothesis.

Concept of ‘Consciousness’; Basic Assumptions

In the preceding section, an initial working hypothesis was proposed to characterize the concept of consciousness: what we refer to as consciousness (CONSC, 1) pertains to subjective experiences (Ph) that are enabled by brain events (N) occurring in specific regions of the brain.

This working hypothesis will now be refined by introducing additional assumptions. While all of these assumptions are based on scientific and philosophical knowledge, which are supported by various forms of justification, many details remain unresolved, and a fully integrated theory is still lacking. The following additional assumptions apply:

- Normally, all phenomena that we can explicitly experience subjectively are classified as part of explicit consciousness (ECONSC ⊆ CONSC). We then say that we are aware of something.

- However, there is also a consciousness of something that is not directly correlated with any explicit phenomenon. These are situations in which we assume relationships between phenomena, even though these relationships themselves are not experienced as phenomena. Examples include:

  - Spoken sounds that refer to phenomena,

  - Comparative size relations between phenomena,

  - Partial properties of a phenomenon,

  - The relationship between current and remembered phenomena,

  - The relationship between perceived and remembered phenomena.

This form of consciousness that exists in the context of phenomena but is not itself a phenomenon will be referred to here as contextual consciousness (CCONSC ⊆ CONSC). Here, too, we can say that we are aware of something, but in a somewhat different manner.

- This distinction between explicit consciousness (ECONSC) and contextual consciousness (CCONSC) suggests that the ability to be aware of something is broader than what explicit consciousness alone implies. This leads to the working hypothesis that what we intuitively call consciousness (CONSC) is the result of the way our brain operates.

Basic Assumptions on the Relationship Between Brain Events and Consciousness

Given today’s neuroscientific findings, the brain appears as an exceedingly complex system. For the considerations in this text, the following highly simplified working hypotheses are formulated:

- Empirical brain events are primarily generated and processed by specialized cells called neurons (N). A neuron can register events from many other neurons and generate exactly one event, which can then be transmitted to many other neurons. This output event can also be fed back as an input event to the generating neuron (direct feedback loops). Time and intensity also play a role in the generation and transmission of events.

- The arrangement of neurons is both serial (an event can be transmitted from one neuron to the next, and so on, with modifications occurring along the way) and hierarchical (layers exist in which events from lower layers can be represented in a compressed or abstracted form in higher layers).
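The two simplified working hypotheses above can be sketched in a few lines of Python. This is a toy model under loudly stated assumptions: the weighted sum and the fixed threshold are a standard simplification swapped in for illustration, not the author's model and not a claim about real neurons. The class name `Neuron` and its parameters are hypothetical. Time is represented by discrete calls to `step`, intensity by floating-point values.

```python
from typing import Sequence


class Neuron:
    """Toy unit: many input events in, exactly one output event out."""

    def __init__(self, weights: Sequence[float], threshold: float,
                 feedback: float = 0.0) -> None:
        self.weights = list(weights)
        self.threshold = threshold
        self.feedback = feedback   # weight of the unit's own previous output
        self.last_output = 0.0

    def step(self, inputs: Sequence[float]) -> float:
        # Register the events of many other neurons (weighted by intensity).
        total = sum(w * x for w, x in zip(self.weights, inputs))
        # Direct feedback loop: the previous output re-enters as an input.
        total += self.feedback * self.last_output
        # Generate exactly one output event for this time step.
        self.last_output = 1.0 if total >= self.threshold else 0.0
        return self.last_output


# Hierarchical arrangement: two lower-layer units feed one higher-layer
# unit, which represents their joint activity in compressed form.
lower = [Neuron([1.0, 1.0], threshold=1.0), Neuron([1.0, 1.0], threshold=2.0)]
upper = Neuron([1.0, 1.0], threshold=2.0)

signal = [1.0, 1.0]
lower_out = [unit.step(signal) for unit in lower]
upper_out = upper.step(lower_out)

# Feedback: once activated, this unit keeps firing without further input.
loop = Neuron([1.0], threshold=1.0, feedback=1.0)
first = loop.step([1.0])
second = loop.step([0.0])
```

The serial arrangement corresponds to passing the output of one `step` call on as the input of the next unit, as is done between `lower` and `upper`.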

From this, the basic assumptions about the relationship between brain events and conscious events are as follows:

- Some brain events become explicitly conscious phenomena (ECONSC).

- Contextual consciousness (CCONSC) occurs when a network of neurons represents a relationship between different units. Such a relationship is consciously known, but because a relationship is not an object, it does not itself appear as an explicit phenomenon. For example, given the explicit phenomenon of the written words “red car” and the perceptual object of a red car, we can know the relationship between them, even though this relationship is not explicitly given as a phenomenon.

- The concept of consciousness (CONSC) thus consists at least of explicit phenomenal consciousness (ECONSC) and contextual consciousness (CCONSC). A more detailed analysis of both the phenomenal space (Ph) and the working processes of the brain (N) as the domain of all brain events will allow for further differentiation of these working hypotheses.

After these preliminary considerations regarding the different event spaces in which a Homo sapiens (HS) can participate through different access modalities (W – BDY – N(CONSC)), the following section will provide an initial sketch of the role of language (L) (with further elaborations to follow).

Descriptive Texts

As previously indicated, within each of the listed observational perspectives—observable behavior (Vh), subjective experiences (Ph), and brain events (N) (see IMAGE 2)—texts are created through which actors exchange their individual views. Naturally, these texts must be formulated in a language that all participants can understand and actively use.

Unlike everyday language use, modern scientific discourse imposes certain minimum requirements on these texts. Some of these requirements can be described as follows:

- For all linguistic expressions that refer to observable events within the domain of a given perspective, it must be clear how their empirical reference to a real object can be verified intersubjectively. The verification process must be able to arrive at one of the following outcomes: (i) it applies (is true), (ii) it does not apply (is false), (iii) a decision is not possible (undetermined).

- It must also be clear: (i) Which linguistic expressions are not empirical but abstract, (ii) How these abstract expressions relate to other abstract expressions or empirical expressions, (iii) To what extent expressions that are themselves not empirical can still be evaluated in terms of truth or falsehood through their relationships to other expressions
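The three possible outcomes of an intersubjective verification can be sketched as a small three-valued check in Python. The combination rule used here is an assumption made only for illustration: a verdict requires at least one observer who can decide and no disagreement among those who do. The names `Verdict` and `verify` are hypothetical.

```python
from enum import Enum
from typing import Optional, Sequence


class Verdict(Enum):
    TRUE = "applies"
    FALSE = "does not apply"
    UNDETERMINED = "decision not possible"


def verify(reports: Sequence[Optional[bool]]) -> Verdict:
    """Combine the reports of several observers about one empirical statement.

    Each report is True, False, or None (the observer could not decide).
    ASSUMED RULE: a verdict needs at least one decision and no disagreement.
    """
    decided = {report for report in reports if report is not None}
    if decided == {True}:
        return Verdict.TRUE
    if decided == {False}:
        return Verdict.FALSE
    return Verdict.UNDETERMINED


agreement = verify([True, True, None])    # observers who decide all agree
disagreement = verify([True, False])      # observers contradict each other
no_access = verify([None, None])          # nobody can decide
```

Other rules, such as majority decisions or repeated measurements, would satisfy the same requirement as long as all participating actors accept them.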

How these requirements are practically implemented remains, in principle, open—as long as they function effectively among all participating actors.

While these requirements can, in principle, be fulfilled within the perspectives of empirical psychology and neuroscience, a phenomenological perspective cannot fully meet at least the first requirement, since the subjective phenomena of an individual actor cannot be observed by other actors. Such observation is only possible, and even then only partially, through indirect means.

For example, if there is a subjective phenomenon (such as an optical stimulus, a smell, or a sound) that correlates with something another actor can also perceive, then one could say: “I see a red light,” and the other actor can assume that the speaker is seeing something similar to what they themselves are seeing.

However, if someone says, “I have a toothache,” the situation becomes more difficult—because the other person may never have experienced toothache before and therefore does not fully understand what the speaker means. With the vast range of bodily sensations, emotions, dreams, and other subjective states, it becomes increasingly challenging to synchronize perceptual content.

The Asymmetry Between Empirical and Non-Empirical Perspectives

This indicates a certain asymmetry between empirical and non-empirical perspectives. Using the example of empirical psychology and neuroscience, we can demonstrate that we can engage empirically with the reality surrounding us—yet, as actors, we remain irreversibly anchored in a phenomenological (subjective) perspective.

The key question arises: How can we realize a transition from the inherently built-in phenomenological perspective to an empirical perspective?

Where is the missing link? What constitutes the possible connection that we cannot directly perceive?

Referring to IMAGE 3, this question can be translated into the following format: How can the brain recognize whether a given brain event (N) originates from

(i) another brain event (N from N),

(ii) a bodily event (N from BDY),

(iii) an external world event (N from W)?

This question will be explored further in the following sections.

Outlook

The following text will provide a more detailed explanation of the functioning of symbolic language, particularly in close cooperation with thinking. It will also illustrate that individual intelligence unfolds its true power only in the context of collective human communication and cooperation.